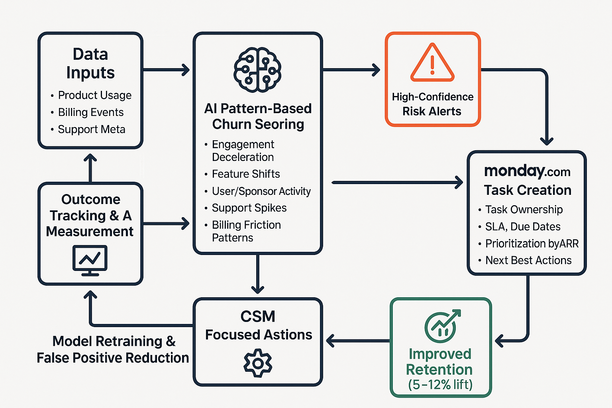

Introduction: Navigating Churn with AI-Driven Pattern-Based Scoring

I’ve learned the hard way that churn isn’t random—it’s patterned. In several retention turnarounds, the difference between clean forecasts and last‑minute fire drills came from reading those patterns early and making them actionable. The teams that piped artificial intelligence (AI) pattern scores into where work already happens saw fewer surprises, cleaner accountability, and a measurable retention lift months before renewal.

This post breaks down how pattern-based churn scoring works, the silent signals that matter, and how to operationalize risk alerts directly inside Monday.com to focus customer success managers (CSMs) on the few actions that move the needle. Along the way, I’ll point you to the Lyaxis field notes for checklists and templates you can copy, plus an optional Monday.com board layout to get started fast.

Understanding Pattern-Based Churn Scoring: Uncovering Hidden Renewal Risks

Pattern-based churn scoring reads behavior sequences across product, billing, and support to flag risk months earlier—often when traditional “health scores” still look fine. Instead of snapshots, it evaluates changes and combinations: what shifted, in what order, and how fast.

- Engagement deceleration + seat contraction after admin turnover: A classic 60–90 day decay pattern that “looks healthy” until usage slope and headcount tell a different story.

- Feature mix shifts and value gaps: Core feature usage dips while exports or API (application programming interface) errors spike—often a quiet migration signal.

- Orphaned users and sponsor silence: Lost executive coverage and no power-user advocacy accelerate risk even if login counts hold.

- Support spikes with downgrade language: Rising TTR (time to resolution) combined with “downgrade,” “too expensive,” or “alternatives” in tickets surfaces pricing friction pre‑procurement.

- Billing friction sequences: Payment retries + long silences + permission cleanups frequently precede formal offboarding.

By weighting patterns and their order, AI cuts noise and reduces false positives versus generic health scores. The net effect: earlier visibility with higher confidence, so teams act before renewal risk hardens.
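To make "changes and combinations" concrete, here is a minimal sketch of scoring velocity and sequence together. It is not a production model: the event labels (`admin_turnover`, `seat_reduction`), the 90-day window, and the 0.4/0.6 weights are illustrative assumptions you would replace with values learned from your own wins and losses.

```python
from dataclasses import dataclass
from datetime import date


def slope(values: list[float]) -> float:
    """Least-squares slope of values against their index (units per period)."""
    n = len(values)
    if n < 2:
        return 0.0
    mean_x = (n - 1) / 2
    mean_y = sum(values) / n
    num = sum((i - mean_x) * (v - mean_y) for i, v in enumerate(values))
    den = sum((i - mean_x) ** 2 for i in range(n))
    return num / den


@dataclass(frozen=True)
class Event:
    day: date
    kind: str  # hypothetical label, e.g. "admin_turnover" or "seat_reduction"


def occurs_in_order(events: list[Event], pattern: list[str], window_days: int) -> bool:
    """True if the kinds in `pattern` appear in that order, all within
    `window_days` of the event that matched the first step."""
    if not pattern:
        return False
    ordered = sorted(events, key=lambda e: e.day)
    for start_idx, first in enumerate(ordered):
        if first.kind != pattern[0]:
            continue
        step = 1
        for later in ordered[start_idx + 1:]:
            if (later.day - first.day).days > window_days:
                break
            if step < len(pattern) and later.kind == pattern[step]:
                step += 1
        if step == len(pattern):
            return True
    return False


def risk_score(weekly_core_actions: list[float], events: list[Event]) -> float:
    """Combine a velocity signal and a sequence signal (weights are assumed)."""
    score = 0.0
    if slope(weekly_core_actions) < 0:  # engagement is decelerating
        score += 0.4
    if occurs_in_order(events, ["admin_turnover", "seat_reduction"], window_days=90):
        score += 0.6
    return score
```

The point of the design is that neither input is a snapshot: the slope captures how fast usage is moving, and the ordered match only fires when seat contraction follows admin turnover, not the other way around.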

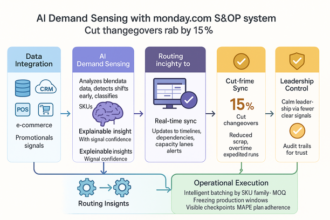

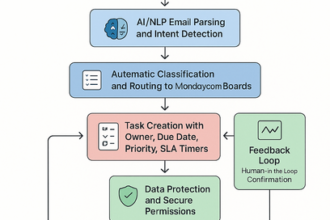

Seamless Integration: Routing AI Risk Alerts into Monday.com for Focused Action

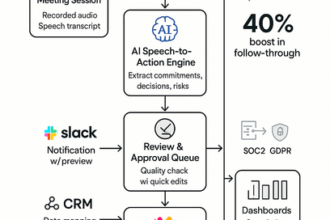

Retention is won when signals become work. Route high-confidence AI alerts into Monday.com as ready-to-act tasks so CSMs and account teams can move quickly and consistently.

- Auto-created risk items with ownership: Each alert becomes a task with a clear owner, SLA (service level agreement), due dates, and the “next best action” playbook.

- Smart prioritization by ARR (annual recurring revenue): Priority levels reflect ARR impact, so limited time goes to the largest, fixable risks first.

- System-of-record accountability: Statuses and outcomes mirror to your CRM (customer relationship management) for clean rollups and a defensible audit trail.

- Leadership-ready visibility: Earlier risk surfacing sharpens forecast calls and reduces ad‑hoc review cycles.
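As a rough sketch of what "alert becomes a task" can look like, the snippet below builds a request body for Monday.com's GraphQL `create_item` mutation. Several things here are assumptions: the column IDs (`status`, `date`, `person`, `numbers`) are placeholders that differ per board, the ARR thresholds are invented for illustration, and the status labels must already exist on your board. Check the variable types against the API version you use. The code only builds the payload; it does not send anything.

```python
import json

MONDAY_API_URL = "https://api.monday.com/v2"

CREATE_ITEM_MUTATION = """
mutation ($boardId: ID!, $itemName: String!, $columnValues: JSON!) {
  create_item(board_id: $boardId, item_name: $itemName, column_values: $columnValues) {
    id
  }
}
"""


def priority_for_arr(arr_usd: float) -> str:
    """Map ARR at risk to a priority label (thresholds are illustrative)."""
    if arr_usd >= 100_000:
        return "High"
    if arr_usd >= 25_000:
        return "Medium"
    return "Low"


def build_risk_item_request(
    board_id: int,
    account: str,
    pattern: str,
    arr_usd: float,
    owner_id: int,
    due_date: str,  # ISO date, e.g. "2025-01-15"
) -> dict:
    """Return a JSON-serializable body for a create_item call."""
    # Column IDs below are hypothetical; look up the real IDs on your board.
    column_values = {
        "status": {"label": priority_for_arr(arr_usd)},
        "date": {"date": due_date},
        "person": {"personsAndTeams": [{"id": owner_id, "kind": "person"}]},
        "numbers": str(arr_usd),
    }
    return {
        "query": CREATE_ITEM_MUTATION,
        "variables": {
            "boardId": str(board_id),
            "itemName": f"Churn risk: {account} ({pattern})",
            # The API expects column values as a JSON-encoded string.
            "columnValues": json.dumps(column_values),
        },
    }
```

To send it, you would POST the returned dictionary as JSON to `MONDAY_API_URL` with your API token in the `Authorization` header. Keeping payload construction separate from the network call makes the priority mapping easy to unit test.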

Want an out‑of‑the‑box way to try this? You can stand up a simple board and automations quickly in Monday.com and refine as your patterns get sharper.

Maximizing Impact: Prioritizing CSM Efforts to Lift Retention by 5–12%

Teams commonly report a 5–12% retention lift when CSM time concentrates on a few fixable risks 60–120 days ahead of renewal. Pattern-based AI clusters those signals and assigns action owners so nothing slips.

- Focus the signal set: Usage contraction in core actions; seat/downgrade plus invoice friction; ticket spikes; sponsor silence.

- Run tiered, two‑week plays: Short, testable interventions with clear exits. Success looks like usage re‑acceleration, executive time booked, or stabilized payment behavior.

- Track SLAs and outcomes: Measure time‑to‑touch, time‑to‑plan, and time‑to‑recovery; roll status into forecast hygiene so leaders skip ad‑hoc reviews.

- Close the executive loop: Turn wins and near‑misses into a playbook library to speed future responses.
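The three SLA measures above are simple timestamp differences, so they are cheap to track. Here is a small sketch, assuming each risk item records when it was alerted, first touched, planned, and recovered. The field names and the 24-hour touch SLA are hypothetical, not a Monday.com schema.

```python
from dataclasses import dataclass
from datetime import datetime
from typing import Optional


@dataclass
class RiskItem:
    alerted_at: datetime
    first_touch_at: Optional[datetime] = None
    plan_agreed_at: Optional[datetime] = None
    recovered_at: Optional[datetime] = None


def hours_between(start: datetime, end: Optional[datetime]) -> Optional[float]:
    """Elapsed hours from start to end, or None if the milestone is not reached."""
    if end is None:
        return None
    return (end - start).total_seconds() / 3600


def sla_metrics(item: RiskItem) -> dict[str, Optional[float]]:
    """Time-to-touch, time-to-plan, and time-to-recovery, all from the alert."""
    return {
        "time_to_touch_h": hours_between(item.alerted_at, item.first_touch_at),
        "time_to_plan_h": hours_between(item.alerted_at, item.plan_agreed_at),
        "time_to_recovery_h": hours_between(item.alerted_at, item.recovered_at),
    }


def touch_sla_breached(item: RiskItem, now: datetime, sla_hours: float = 24.0) -> bool:
    """True if the first touch came late, or is still missing past the SLA."""
    reference = item.first_touch_at or now
    return (reference - item.alerted_at).total_seconds() / 3600 > sla_hours
```

Returning `None` for unreached milestones, rather than zero, keeps averages honest when you roll these numbers into a forecast review.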

Building a Scalable, Low-Overhead Workflow for Predictive Retention Success

Predictive retention works when the workflow is light: data in, patterns scored, alerts routed, outcomes tracked. Done right, teams get earlier signals without babysitting a complex stack.

- Unify key data streams: Product usage, billing events, and support metadata feed a single scoring pass that looks months ahead, not just at lagging indicators.

- Score changes, not snapshots: Emphasize velocity and sequence to suppress noise and surface real drift.

- Route only high-confidence risks: Send alerts with owners into Monday.com; suppress low-signal events to protect focus.

- Close the loop and learn weekly: Log actions and outcomes, retrain models on fresh wins/losses, and keep trimming false positives.

- Deploy fast without heavy lift: Use API ingest, a lightweight scoring service, and Monday.com automations; no large in‑house build is required.
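The "route only high-confidence risks" step can be sketched as a small gate in front of your task creation. This is one possible approach, not a prescribed one: the 0.8 confidence threshold and 14-day cooldown are assumed values, and the class name is made up for illustration.

```python
from datetime import datetime, timedelta


class AlertRouter:
    """Decide which scored alerts become tasks.

    Drops low-confidence alerts and suppresses repeats of the same
    (account, pattern) pair inside a cooldown window.
    """

    def __init__(self, min_confidence: float = 0.8,
                 cooldown: timedelta = timedelta(days=14)) -> None:
        self.min_confidence = min_confidence
        self.cooldown = cooldown
        self._last_routed: dict[tuple[str, str], datetime] = {}

    def should_route(self, account_id: str, pattern: str,
                     confidence: float, at: datetime) -> bool:
        if confidence < self.min_confidence:
            return False  # low-signal event: protect CSM focus
        key = (account_id, pattern)
        last = self._last_routed.get(key)
        if last is not None and at - last < self.cooldown:
            return False  # already routed recently: avoid duplicate tasks
        self._last_routed[key] = at
        return True
```

In use, you would call `should_route` for each scored alert and create a Monday.com item only when it returns `True`. The in-memory dictionary is fine for a sketch; a real deployment would need to persist that state between runs.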

For copy‑paste checklists and field‑tested patterns, browse the Lyaxis newsletter. You’ll find practical signal libraries and an optional Monday.com board template to operationalize them in a day.

Net effect: fewer surprises, tighter forecasts, and time back for your team—because churn follows patterns, and now your process does too.